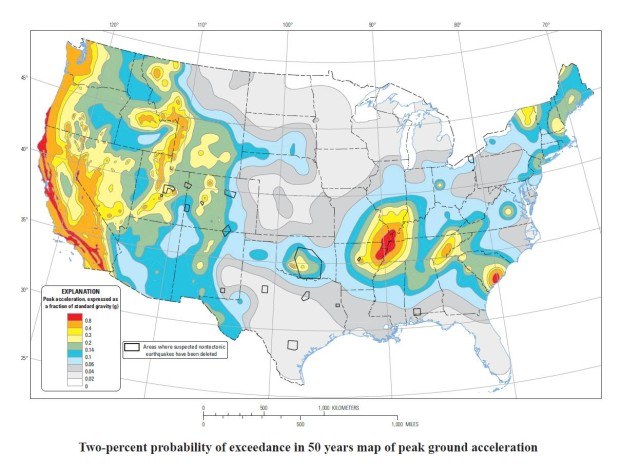

Every six years the U.S. Geological Survey (USGS) produces an updated set of seismic hazard maps for the United States. These National Hazard Maps show the estimated probabilities of different levels of ground shaking by location, and the maps are used by engineers for building and other structural designs in earthquake-prone areas. The maps also provide guidance for local building codes and inform the earthquake catastrophe models.

Executive Summary

In a 2014 report on earthquake forecasting, “The Third Uniform California Earthquake Rupture Forecast (UCERF3),” U.S. Geological Survey scientists and collaborating partners in southern California recognize the possibilities of multi-fault ruptures and background events, where there are no known faults and historical events. While the larger magnitude multi-fault scenarios are the most significant methodological change from a scientific perspective, it is the background events, which can happen anywhere, that have more implications for insurers, according to risk expert Karen Clark.

The most recent report was released in 2014 and will eventually provide the basis for all of the U.S. earthquake models. Because of added complexities, it’s taking the modeling companies longer than usual to incorporate the updated information. This article explains the new report, why it’s more complex and the most important implications for senior insurance executives.

The first thing for decision-makers to understand is that when the maps are updated, it doesn’t mean there are radical new theories or significant changes in the scientific understanding of earthquakes. Rather, the updated maps are “snapshots” of the ongoing research in earthquake hazard at particular points in time. The maps have been updated every several years with the most current research at the time, and more scientists are contributing to successive reports, which means a wider range of expert opinion (and wider uncertainty) is being accounted for.

Second, the seismic hazard maps cannot be directly incorporated into the catastrophe models because the USGS methodology doesn’t produce earthquake “events” as required by insurers and reinsurers. The maps show the likelihood of different levels of ground motion at specific locations as required by design engineers. Catastrophe modelers analyze the data underlying the maps and use that information to develop their own proprietary catalogs of events, which is why the various models will produce different results even when they’re all based on the same report.

Third, and most importantly, the most significant update in the 2014 report is better recognition of the wide uncertainty around modeling future earthquakes. As opposed to knowing a lot more, the new report better reflects what scientists don’t know about where and how frequently large magnitude events will occur.

While scientists generally understand the geological mechanisms generating earthquake events, there are earthquake sources—i.e., faults—that are unmapped and unknown to the scientific community. Actual events are frequently surprises in terms of location, magnitude and resulting damage. The last major California earthquake (Northridge) surprised scientists because it occurred on a previously unknown fault. The 2011 magnitude 9 Tohoku and the 2012 magnitude 8.6 Sumatra events demonstrated that earthquakes can rupture beyond previously understood fault boundaries, resulting in larger rupture areas and magnitudes than expected by the scientific community. The new report addresses these issues and better recognizes the uncertainty in the locations and magnitudes of future events.

Background on How Earthquakes Are Modeled

In order to estimate your loss potential from future earthquakes, estimates of the frequencies of events of different magnitudes are required. For example, how likely is a magnitude 7.5 event in California versus the New Madrid seismic zone? How likely is this event in northern versus southern California? How likely is a magnitude 8 earthquake in different regions?

The historical data alone is not sufficient to answer these questions because the return periods of large magnitude events are usually in the hundreds or even thousands of years. To supplement the historical record, scientists use additional sources of information such as fault slip rates and paleoseismicity data to develop estimates of the frequency-magnitude relationships of future events.

For seismically active regions and known faults, fault movements (distance and direction) are measured using instrumentation and GPS. This data informs scientists of the potential stress buildup over time and therefore the likely magnitudes of future earthquakes. For major faults, such as the Hayward and San Andreas, scientists have conducted paleoseismicity studies that involve digging trenches to find evidence of prehistoric events. Sediment layers indicative of past events are carbon dated to inform scientific estimates of the likely return periods of major events.

Scientists must also account for seismicity that occurs in areas with no known faults and make assumptions for areas that haven’t experienced historical events but that could experience future events. This is known as “background” seismicity, and historically the frequency-magnitude relationships for background events have been estimated separately from the fault-based seismicity.
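A frequency-magnitude relationship of this kind is often summarized in the Gutenberg-Richter form, log10 N = a - b × M, where N is the annual rate of events at or above magnitude M. The article does not say which functional form the USGS applies to any particular source, so the sketch below is only illustrative. The `a` and `b` values are hypothetical and are not taken from the report.

```python
def annual_rate(a: float, b: float, magnitude: float) -> float:
    """Annual rate of events at or above `magnitude` (Gutenberg-Richter form)."""
    return 10 ** (a - b * magnitude)


def return_period_years(a: float, b: float, magnitude: float) -> float:
    """Average number of years between events at or above `magnitude`."""
    return 1.0 / annual_rate(a, b, magnitude)


# Hypothetical parameters for an illustrative region (not from the USGS report).
A_VALUE = 4.0
B_VALUE = 1.0

for m in (6.0, 7.0, 8.0):
    years = return_period_years(A_VALUE, B_VALUE, m)
    print(f"M{m:.1f}+ : about one event every {years:,.0f} years")
```

With a `b` value of 1, each full step up in magnitude makes events ten times rarer, which is why the historical record alone says little about the largest events.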

What’s New in the 2014 Report

The USGS report contains updated information on earthquake hazard that includes the seismicity assumptions discussed above and the assumptions on the ground motion that will result from future earthquakes. The new report has updated assumptions in all regions of the U.S.

For regions outside of California and the Pacific Northwest, the impacts of the updates are small. In the Pacific Northwest, the hazard has increased in the southern portion of the Cascadia Subduction Zone due to new magnitude and rupture geometry assumptions.

The most significant methodological change is in California because the historical approach of estimating the frequency-magnitude relationships for individual faults and fault segments separately resulted in a problem for scientists. When all of the assumptions were put together, the implied frequency of moderate events—magnitude 6.5 to 7.5—for all of California was significantly higher than observed in the historical record.

This presented an interesting conundrum to scientists: Was the historical record a unique period with fewer than expected moderate earthquakes, or was the higher frequency an artifact of the scientific methodology?

Over the past several years, a group of scientists in southern California put together a new methodology called UCERF3 (Third Uniform California Earthquake Rupture Forecast). The new model recognizes that faults are not separate but instead are interconnected in “fault systems.” Within fault systems, multiple nearby faults can rupture simultaneously creating larger magnitude events than the individual faults could produce on their own (because magnitude is a function of rupture area).
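To illustrate why joint ruptures produce larger magnitudes, the sketch below uses one published empirical magnitude-area regression (Wells and Coppersmith, 1994, all slip types). This is an assumption chosen for illustration and is not necessarily the scaling relation used in UCERF3. The fault areas are hypothetical.

```python
import math


def magnitude_from_area(rupture_area_km2: float) -> float:
    """Moment magnitude from rupture area in square kilometres.

    Assumed relation: M = 4.07 + 0.98 * log10(area), an empirical
    regression; other published relations give somewhat different values.
    """
    return 4.07 + 0.98 * math.log10(rupture_area_km2)


# Hypothetical rupture areas for two neighbouring faults, in km^2.
fault_a_km2 = 1000.0
fault_b_km2 = 1000.0

m_a = magnitude_from_area(fault_a_km2)
m_b = magnitude_from_area(fault_b_km2)
m_joint = magnitude_from_area(fault_a_km2 + fault_b_km2)

print(f"Fault A alone: M{m_a:.2f}")
print(f"Fault B alone: M{m_b:.2f}")
print(f"Joint rupture: M{m_joint:.2f}")
```

Under this assumed relation, the joint rupture is larger than either fault could produce alone, because the logarithm is taken of the combined area.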

The new fault-system approach for estimating the rates of future earthquakes is called the “grand inversion,” and it solves the longstanding problem of overpredicting moderate magnitude events in California. It does this by generating more events of magnitude 8 and larger to consume more of the seismic moment budget previously consumed by the moderate events. So, according to the new model, more of the energy released over time by earthquakes will be from larger magnitude events.
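The moment budget argument can be made concrete with the standard moment magnitude definition, in which seismic moment grows by a factor of 10^1.5 (about 32) per unit of magnitude. The sketch below is a rough illustration of that arithmetic only. It is not the grand inversion itself, and the function names are hypothetical.

```python
def seismic_moment_nm(magnitude: float) -> float:
    """Seismic moment in newton-metres from moment magnitude.

    Uses the Hanks-Kanamori definition with the constant 9.05;
    some references round it to 9.1, which does not affect ratios.
    """
    return 10 ** (1.5 * magnitude + 9.05)


def equivalent_event_count(large_magnitude: float, small_magnitude: float) -> float:
    """Number of smaller events releasing the same moment as one larger event."""
    return seismic_moment_nm(large_magnitude) / seismic_moment_nm(small_magnitude)


ratio = equivalent_event_count(8.0, 7.0)
print(f"One M8.0 releases the moment of about {ratio:.1f} M7.0 events")
```

Because a single magnitude 8 event uses up as much of the budget as roughly 32 magnitude 7 events, adding even a few very large ruptures to the model sharply reduces the number of moderate events needed to balance it.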

There’s a greater increase in larger earthquakes in southern California, according to UCERF3, because this region has more faults that can host multi-fault ruptures. UCERF3 agrees with previous studies that the southern San Andreas is the most likely fault to host a large earthquake.

The new UCERF3 model is more complex than previous models because it accounts for many more rupture scenarios. Implementing the grand inversion directly is problematic for catastrophe modelers because it would result in millions of events. The UCERF3 group is not generating catalogs of earthquake events but rather estimating the probabilities of different levels of ground motion by location. Even for this more limited purpose, the scientists required a lot of time and supercomputing capabilities.

But how important are these millions of new scenarios to insurance executives who are concerned about earthquake losses?

These new large magnitude scenarios have return periods of 1,000 years or longer, and their most likely locations are in the less populated areas of California. These new scenarios are not the ones driving the largest losses.

A Pragmatic Approach to Earthquake Loss Estimation

Even with millions of simulated scenarios, UCERF3 is still an approximation of the earthquake hazard. There’s still a lot of uncertainty and a good chance the next major event in California will not be one of the UCERF3 events.

What’s less highlighted in the report but more important to insurance executives are the updated probabilities of the more frequent background events. According to the report, an approximately 100-year return period event in California is a magnitude 7. Because this is a background event—accounting for unknown faults—it can happen anywhere, including in the most densely populated areas of Los Angeles.
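A return period translates into a probability over a planning horizon once an occurrence model is chosen. The sketch below assumes a simple time-independent (Poisson) model, which is an assumption made here for illustration and is not stated in the article. The horizons are hypothetical.

```python
import math


def probability_of_at_least_one(return_period_years: float, horizon_years: float) -> float:
    """Chance of one or more events within the horizon, assuming Poisson occurrence."""
    annual_rate = 1.0 / return_period_years
    return 1.0 - math.exp(-annual_rate * horizon_years)


RETURN_PERIOD = 100.0  # the approximately 100-year event cited above

for horizon in (1, 10, 30):
    p = probability_of_at_least_one(RETURN_PERIOD, horizon)
    print(f"{horizon:>2}-year horizon: {p:.1%} chance of at least one event")
```

Under this assumption, a 100-year event has roughly a 1 percent chance of occurring in any single year but about a one-in-four chance over 30 years.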

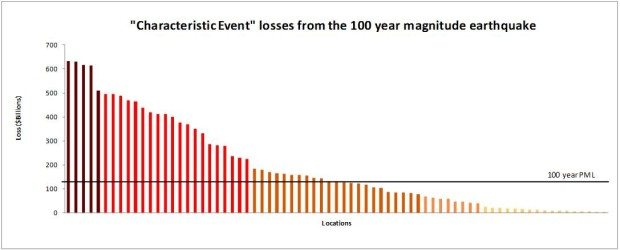

According to research conducted by Karen Clark & Co. (KCC), the largest loss (including insured and uninsured properties) from a magnitude 8 event on the southern San Andreas fault—which is to the east of Los Angeles and the most likely location for this size event—is $328 billion. The losses from a magnitude 7 earthquake near downtown Los Angeles would likely be almost twice that amount—over $600 billion. The accompanying graph shows the estimated total losses from a magnitude 7 event occurring in different parts of the Los Angeles area.

More than half of the 100-year event scenarios would cause a loss greater than the 100-year PML in Los Angeles, estimated to be approximately $125 billion. While the PML is a good average measure of large loss potential, actual events will likely cause losses well below or well in excess of this number.

This analysis clearly shows catastrophes are like real estate—it’s all about location. While scientists are interested in where the largest magnitude events can occur, insurers need to focus on where they have exposure concentrations that could lead to surprise and potentially solvency-impairing losses, even from moderate-size events.

The Implications for Decision-Makers

Much of the scientific research on earthquakes is focused on known faults and seismic sources. But scientists are now explicitly recognizing that earthquakes can rupture beyond known faults and that major events can occur far from mapped faults. Insurers need to factor the wide uncertainty on the locations of future events into risk management strategies.

The traditional Exceedance Probability (EP) curve metrics, such as PMLs, don’t help insurers identify where they can have surprise and outsized losses from background events. The newer Characteristic Event (CE) method is more suitable for this purpose. (See related article, “What Boards Would Like to Know About Catastrophe Losses,” Carrier Management, Q1 2015 edition, page 50, also available online at carriermag.com/4mepbis.) Using information directly from the new USGS report, insurers can see where they have “hot spots” and therefore better manage their future earthquake losses.
