Two experts on autonomous vehicle safety recently dove into legal arguments and engineering factors that shaped last week’s landmark jury decision against Tesla, at one point surfacing an important takeaway about insurance for Tesla drivers.

“Your insurance company most likely is going to ‘total loss’ this car. You want to say to your insurance company, ‘I want this car put on hold until we’ve figured out what happened,’” said Mike Nelson, a partner in the law firm Nelson Law, LLC, during a webinar Monday. He was advising Tesla owners who might at some point be involved in a serious accident like the one involving George McGee, the Tesla driver in the Benavides v. Tesla case.

A Florida jury last week found that Tesla was 33 percent liable for an accident that killed a woman and injured her boyfriend in 2019. The two were standing next to their Chevy Tahoe when McGee’s Tesla Model S, operating in Autopilot mode, went through a T-intersection and rammed into the parked Chevy.

The jury verdict and award holding Tesla liable for $42.5 million in compensatory damages and $200 million in punitive damages, which came in spite of the fact that McGee admitted being distracted at the time of the accident, represent a departure from past legal challenges in which Tesla’s efforts to put the full blame on drivers in crashes won out.

During the webinar, Nelson offered his advice about preserving evidence in more typical cases, where drivers fully blame their cars’ automated features for harmful consequences. He was reacting to reports suggesting that, in the Benavides case, Tesla had deleted some vehicle data from the moments leading up to the accident and then denied ever having it.

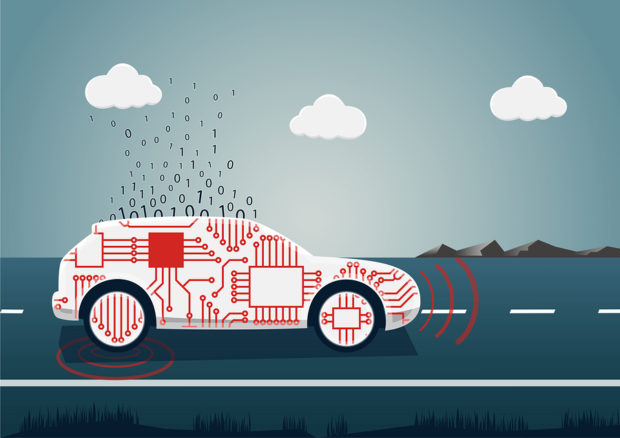

Co-panelist Philip Koopman, faculty emeritus at Carnegie Mellon University, an engineer who specializes in design reviews of embedded software in self-driving cars and other products, explained how the plaintiffs’ engineering expert in the Benavides case might have unearthed the deleted information. Without direct knowledge, but basing his discussion on his own participation in similar exercises, Koopman noted that when a Tesla crashes, “it phones home after the crash and sends home some of its operating data,” transmitting information about whether Autopilot was on or off, whether the driver moved the steering wheel, whether the brakes were engaged, and other vehicle information back to Tesla, filling a massive spreadsheet. “That data gets sent via cellphone-data-link up to Tesla, and they keep it on their servers. And it’s really common in these cases for the plaintiffs to get hold of that spreadsheet and do some analysis on data,” Koopman reported.

“But there’s a second place you can get the data” when that spreadsheet is not available, as when transmitted data is erased, Koopman said. There is “a tiny SD card” (similar to the removable memory cards found in digital cameras) located inside the car’s main computer that has even more information.

“It’s not an SD card the occupant has access to. You have to go behind the dashboard to get it. But you can pull out this SD card and it has [a] full set of data,” which can extend all the way back to the day the car was purchased. “It’s a proprietary format, [and] it’s super painful to extract that data. But you certainly can do it,” Koopman said, speculating that this is what the plaintiffs’ expert did in the Benavides case.

“So, for anyone in the audience, if you have a Tesla crash that’s a big deal, make sure nobody touches the computer. And make sure you get forensic chain of custody on that SD card inside your computer. That’s your backup in case Tesla mysteriously loses your data.”

Turning his attention to the wrinkle about an insurance company totaling a vehicle after an accident, Nelson said, “I have had these situations where an insurance company sold the salvage. [The] parts were strewn all over North America.”

Nelson, in addition to being a partner in the law firm Nelson Law, LLC, which offers litigation and risk management services related to emerging mobility technology, is also the founder of Quantiv Risk, a technology company that uses vehicle performance data to uncover objective views of vehicle crashes. Like Koopman, he was not involved in the Benavides case, but through Quantiv Risk he has had experience with Tesla vehicle performance data spreadsheets, working to deliver such data in digestible formats to claims personnel at auto insurers and providing consulting services to help in adjudicating cases involving Tesla accidents. (Read more about Quantiv Risk, Tesla data and Nelson in the 2022 Carrier Management profile, “Lawyer-Turned InsurTech Founder Looks Into Cloud to Settle Tesla Claims.”)

Hot Take: Why the Jury Found Tesla Liable

While moderating the webinar, Nelson, a self-driving car enthusiast who has owned three Teslas in the past, noted the existence of polarizing views around human error vs. machine responsibility questions that lie at the heart of the Benavides case. But he repeatedly brought the audience back to one point of agreement: “What we can all rally around is the need for safer roads for ourselves, our families and the public at large. And mobility tech holds such promise to support these goals if it’s introduced properly and engineered with rigor and objectively evaluated.”

“Those are tall orders for the car manufacturers and the tech that goes in them. But it’s very important that we as a society make those moves as well because somehow we’re still losing 40,000 people a year, and that has to stop,” he said, referring to the high number of auto crash fatalities each year.

While their hands-on knowledge of the extraction and interpretation of vehicle data in other situations served to inform their discussion of missing data in the Benavides case, neither Koopman nor Nelson suggested that the alleged deletion of data had inflamed the jury into returning a nuclear verdict. Instead they focused on factors like Tesla’s failure to stop drivers from using Autopilot on roads for which the feature was not designed and on inadequate driver monitoring as the issues which will need attention to prevent future harms.

As for what may have incited the jury, they also highlighted Tesla’s aggressive marketing and safety claims.

Without the benefit of trial transcripts to read yet, Koopman stressed that his commentary represented a “hot take.”

“When you find punitive damages, that’s a really big deal. It’s not close [where] somebody did a coin flip. It means that the jury was really, really convinced that there was bad stuff going on,” he said.

In the past, Tesla would rely on the argument that the driver was to blame: “the driver knew what they were doing. They signed up.”

Nelson noted, however, that the people entitled to this jury’s award were not even in the car. “They were not parties to the agreement that Tesla had with McGee… So, this is an unusual case in that people that are outside the contractual relationship between Tesla and the owner of the car were the ones that were suing,” he said, noting that McGee (and presumably his auto insurance company) had already settled claims with those plaintiffs in a separate action.

Koopman reiterated the point later. “The two victims, they didn’t sign up. They weren’t in the car. That may have a lot to do with the [size of the] verdict we saw.”

Koopman said he’s personally been waiting for the Benavides type of outcome, even in cases where the driver is the party suing a car maker. “The ironies of automation and automation complacency pretty much guarantee people will act” the way McGee did. The Tesla driver was reportedly searching around the floorboards of his car for a dropped cellphone, relying on Autopilot to pay attention while he was not.

“It’s not a question of moral fiber. It’s just part of the human condition.”

“The fact that a jury found that even though the driver had succumbed to these weaknesses in human nature, which are fully anticipated, that they were still going to blame Tesla anyway, that was huge,” he said, suggesting that Tesla’s “hype” around its tech may have played a part in the jury verdict.

“They’ve been overstating the capability of their automation,” Koopman said, continuing to offer personal reactions from his outsider perspective. Reporting that Tesla statements about Autopilot’s ability to stop even if an alien spaceship suddenly dropped on a roadway came out at trial, the design engineer suggested that such marketing fuels drivers’ overconfidence in technology.

Fundamental Flaws

Nelson noted that Dr. Mary “Missy” Cummings, an engineering professor at George Mason University and a former safety advisor for the National Highway Traffic Safety Administration, served as an expert for plaintiffs during the Benavides trial. She testified that the Autopilot system is “fundamentally flawed” and should never have been used outside of its “operational design domain” (ODD), he said.

Koopman explained that when a system is designed, “you have a model of the world, and it’s supposed to be safe as long as you’re in that model.” If intersections are outside the ODD, you’re not supposed to drive on roads with intersections—just on limited access highways. “But the rub here is that this system is happy to let itself be turned on even when it’s outside its ODD.”

As a result, “people get seduced into trusting it. … ‘It seems to work. It must be OK,’” they think.

Speaking from personal experience, having owned a 2018 Tesla Model S, a Model 3 and a Model Y, Nelson reported being able to shift into a navigation mode that allowed the car to take him to his journey’s end, even if the vehicle had to travel outside its ODD.

“I live in a rural part of Pennsylvania, and once you get off a restricted access highway, Route 81, the car will take you off the off ramp and then it will put you on a secondary road. Then it’ll take you through two traffic lights, travel seven miles on a single lane road, and turn onto my roadway, which is dirt. And it will stay on a dirt road, no lane markings, for two miles until it gets to my driveway.”

“I never had to do anything special to do it…You just click the [steering] stalk to make it work.”

The system “never really said, ‘We’re not on a highway anymore. Do you want to keep going?’ It just kept going.”

While the owner’s manual may state the limitations, Koopman said that’s not enough. You can turn Autopilot on in “a completely unsafe place.”

“Tesla would say you’re supposed to know not to do that. But if it lets you do it, human nature says it must be OK.”

‘Predictable Misuse’ and Product Liability

Koopman and Nelson also discussed Tesla’s alleged failure to adequately warn a distracted driver. Other car companies, they said, accomplish this via in-cabin cameras, while Tesla has been using visible and audible hands-on-wheel warnings (and statements in its manuals about the need to be attentive). Nelson reported that Tesla has now quietly moved into the in-cabin camera camp, although that monitoring wasn’t in operation in McGee’s car.

Together, inadequate driver warnings and ODD issues suggest Tesla “failed to anticipate the ‘predictable misuse of the vehicle,’” Nelson said, repeating another point that plaintiffs’ expert Cummings raised at trial.

“Predictable misuse is a concept that’s recognized in product liability law,” Nelson said. “If a product can be misused and you didn’t warn of that, or you didn’t take safeguards to make sure predictable misuse was [taken into account], you can be held strictly liable.”

Koopman added, “If the car lets you play with your cellphone while you’re driving it, people are incentivized to do that. They’ve been told it drives safer than they do. So, there’s no harm…That’s how people are wired.”

Punishing the driver, he said, “is not going to stop the next person. You’re trying to deny human nature.”

Koopman also reacted to Nelson’s report on Tesla’s defense in the Benavides case, which involved Tesla lawyers saying that the crash was not preventable by any vehicle system in 2019, and that Autopilot performed as expected.

“You can have a system that is horribly dangerous, that works exactly in the horribly dangerous way you intended it to work. That doesn’t mean it’s safe,” Koopman said. “It’s a useful statement to say there are no software bugs that we know of,” the engineer agreed. “But if your human-computer interaction model was flawed—working exactly as intended according to a flawed model—that still results in a product that presents unreasonable risk,” he opined.
