Before manufacturers let autonomous vehicles loose on public roadways, they’ll have to show that their driverless cars are a lot better than humans at getting from Point A to Point B without crashing.

The partially autonomous vehicles already on the road have made dumb mistakes — some of them deadly — that give reason to question whether robots are ready to take the wheel. In the past year, several key advocates for autonomous technology downplayed expectations that fully autonomous cars will soon be on the road.

“We overestimated the arrival of autonomous vehicles,” Ford Chief Executive Officer Jim Hackett said in April to the Detroit Economic Club.

InsurTech investors are working to resolve at least two of numerous technical challenges that must be overcome before self-driving cars become reality. One is the propensity of unruly human pedestrians to get in harm’s way. The other is a frailty unique to machines that allows unauthorized users to take over control simply by transmitting the proper commands.

Unpredictable Pedestrians

Anthemis Group, a London-based investment house that finds funding for fintech and InsurTech startups, says it’s getting its hands around the first problem. Anthemis has added to its portfolio Humanising Autonomy, a startup founded by former students of Imperial College London. The company’s chief product is an analytic platform that predicts pedestrian behavior.

Matthew Jones, an Anthemis principal who oversees InsurTech investments, said the Humanising Autonomy product is similar to a plug-in. It interacts with other technologies, such as autonomous vehicles, through an application program interface. The product predicts what pedestrians will do next by using 90 attributes, such as eye direction, whether the person is using a cellphone and how close he or she is to the curb.

The platform can be used in autonomous vehicles to send alerts when it detects that a pedestrian is moving into the vehicle’s path. Jones said that predicting pedestrian behavior is one of the major challenges to operating vehicles autonomously.

“What we’ve seen is one of the major causes of autonomous vehicles being stopped are the pedestrians,” he said. “The pedestrians are so unpredictable.”

Jones said Humanising Autonomy can also be installed as a safety device in factories where robots work alongside humans.

But the potential as a component of autonomous vehicles or factory robots wasn’t the main attraction for Anthemis. Jones is a former Swiss Re executive. He said the platform’s potential to serve the insurance industry captured his attention.

Jones said he believes that autonomous vehicles will transform the insurance industry by virtually eliminating passenger and commercial automobile claims. Damages caused by autonomous cars are more likely to be pursued as product liability instead of conventional property damage claims, he said.

Jones said he believes that manufacturers will likely sell insurance along with their vehicles as a package, perhaps as a subscription service. The continuous stream of data sent by autonomous vehicles will create a treasure trove of information for risk managers.

Jones said when that transformation begins, insurers need to be in a position to completely understand the technology.

“Aggregation of risk is going to significantly change over time,” he said. “By better understanding what is going to happen when humans and machines interact, we’re going to have a much better understanding of how claim frequency and claim severity is going to change over time as well.”

Death on the Highway

Several mishaps involving semi-autonomous vehicles—some of them deadly—give reason for pause.

Three motorists have died while operating Tesla vehicles in self-driving mode, which Tesla calls Autopilot. Tesla improved its system after a vehicle crashed into a semi-truck crossing a highway in 2016. But in 2018, a driver died when crashing into a median.

In March, a Tesla Model S collided with a semi-truck in Florida while on Autopilot, killing the Tesla driver. Bloomberg reported that the National Highway Traffic Safety Administration has issued subpoenas to Tesla, perhaps presaging a formal investigation to determine if Autopilot has a safety defect.

There’s also been at least one pedestrian death. On March 18, 2018, 49-year-old Elaine Herzberg was killed when a self-driving Uber Technologies test vehicle ran into her as she pushed a bicycle across a four-lane highway in Tempe, Arizona.

A preliminary report by the National Transportation Safety Board determined that the Uber Volvo was in self-driving mode at the time of the crash and detected Herzberg in the crosswalk six seconds before impact. Uber told investigators that the auto-braking system is turned off when its test vehicles are in self-driving mode “to reduce the potential for erratic vehicle behavior.” But the human driver was not watching the road; her eyes were on the vehicle’s control screen when the Volvo struck Herzberg at 43 mph, according to the NTSB. Even though the self-driving system determined that an emergency braking maneuver was necessary 1.3 seconds before impact, the system was not programmed to alert the car’s operator.
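To put those timings in perspective, the short sketch below converts them into distances. It is only a back-of-the-envelope calculation: it assumes the vehicle held a constant 43 mph over the whole interval, which the NTSB figures quoted above do not establish, and the function name `distance_ft` is made up for illustration.

```python
# Rough distance covered at a constant speed (an assumption, not an NTSB finding).
MPH_TO_FT_PER_S = 5280 / 3600  # feet per mile divided by seconds per hour


def distance_ft(speed_mph: float, seconds: float) -> float:
    """Return the distance in feet covered in `seconds` at a constant `speed_mph`."""
    return speed_mph * MPH_TO_FT_PER_S * seconds


if __name__ == "__main__":
    # 6 seconds: when the system first detected the pedestrian.
    # 1.3 seconds: when it determined emergency braking was needed.
    for label, t in [("first detection", 6.0), ("braking decision", 1.3)]:
        print(f"{label}: about {distance_ft(43, t):.0f} ft from impact")
```

Under that constant-speed assumption, the car was a little under 380 feet away at first detection and roughly 80 feet away when the system concluded that emergency braking was needed.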

A relatively minor mishap suffered by a semi-autonomous shuttle bus in November 2017 illustrates another way things can go haywire when autonomous vehicles interact with humans.

An experimental shuttle sponsored by the AAA auto club ran a loop of less than one mile in downtown Las Vegas. An operator was stationed on board and had been issued a hand-held controller to use in emergencies.

But during the first hour of operation on the day the robot shuttle bus debuted, a semi-truck bumped into it.

The controller was in a closed compartment when the tractor-trailer crossed the shuttle’s path as it backed into an alley, according to an NTSB report released last month. The driver of the truck assumed the shuttle bus would stop at a “reasonable distance.”

The operator waved frantically at the driver to signal him to stop backing up and finally retrieved the controller and pushed the emergency stop. The bus, made by the French manufacturer Navya, was already slowing down as it followed its programming to stop exactly 9.8 feet from any obstacle, the NTSB report says. That was too close. The truck driver continued to back up until the cab of his vehicle brushed against the shuttle, damaging a plastic panel but nothing else.

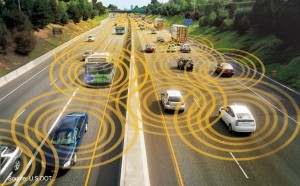

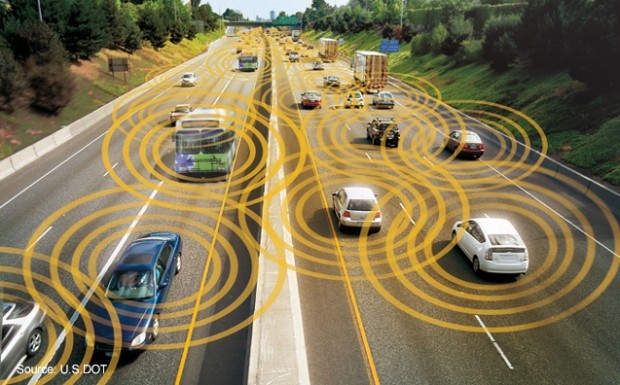

Crashing into people and things is not the only peril that autonomy may bring. With any sophisticated electronic system, there’s always a chance of cyberattack.

A 2016 Wired magazine article featured a demonstration in which researchers from the University of Michigan’s Transportation Research Institute used a laptop to hijack the controls of a big rig truck. With a tap on the keyboard, the researchers caused the truck to speed up. They were also able to take over the truck’s instrument panel to display false readings.

The researchers told Wired that all commercial trucks use the same Society of Automotive Engineers standard for communication within their electronic systems, so a hacker who finds a way into one vehicle can get into them all. The researchers took two months to design a hack as part of a class assignment.

That points to a potential nightmare if one technology emerges as some have predicted: interconnected fleets of autonomous semi-trucks prowling the Interstate highway system, driving in bumper-to-bumper convoys to save energy by reducing aerodynamic drag.

GuardKnox Cyber Technologies says it is ready for that threat. The Israel-based firm has adopted a cybersecurity system used to protect fighter jets.

Chief Executive Officer Moshe Shlisel said GuardKnox’s technology is designed to protect computers that are not continuously connected to the Internet, which means they can’t rely on electronic signatures to authenticate that commands are authorized.

Shlisel said GuardKnox controls every bit of data that passes through a vehicle’s computer system to block any malicious messages. In contrast, he said, most cybersecurity systems use encryption to detect intruders.
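One common way to inspect every message without depending on an online signature check is a deny-by-default allowlist: anything not explicitly permitted is dropped. The sketch below illustrates that general idea only. It is not GuardKnox’s implementation, which is not described here, and the `Message` type, the message IDs and the payload lengths are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Message:
    """A simplified in-vehicle network message (hypothetical format)."""
    msg_id: int
    payload: bytes


# Hypothetical allowlist: permitted message ID -> exact payload length in bytes.
ALLOWED_MESSAGES = {
    0x100: 2,  # e.g. a speed reading
    0x200: 8,  # e.g. an engine status frame
}


def is_allowed(msg: Message) -> bool:
    """Accept a message only if its ID is listed and its length matches."""
    expected_len = ALLOWED_MESSAGES.get(msg.msg_id)
    return expected_len is not None and len(msg.payload) == expected_len


def filter_messages(messages: Iterable[Message]) -> List[Message]:
    """Drop every message that is not explicitly permitted."""
    return [m for m in messages if is_allowed(m)]


if __name__ == "__main__":
    traffic = [
        Message(0x100, b"\x00\x2b"),  # listed ID, correct length: kept
        Message(0x100, b"\x00"),      # listed ID, wrong length: dropped
        Message(0x7FF, b"\x01\x02"),  # unlisted ID: dropped
    ]
    for m in filter_messages(traffic):
        print(hex(m.msg_id), m.payload.hex())
```

The design choice here is that the filter needs no network connection or key exchange, since the rules ship with the vehicle. A real system would check far more than ID and length.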

He said the product can be sold as an “aftermarket” solution, meaning it can be installed in existing fleets. It’s a big market: Shlisel said there are 34 million commercial vehicles in the United States.

Shlisel said that many commercial trucking companies have connected their fleets with telematics to monitor driver safety, vehicle locations and how the vehicles are operated. He said those fleets are already at risk from hackers, who don’t have to wait for full autonomy to make mischief.

“No one is interested in publicizing this,” he said. “Fleets wouldn’t admit that they have any problems.”

Shlisel said the potential market for GuardKnox will grow as manufacturers deploy increasingly automated vehicles. He said all new vehicles already have SIM cards, which are the main communications component in cell phones.

But Shlisel said he doesn’t expect fully autonomous vehicles to be in wide use for at least 15 years. The public is not ready for it.

“Airplanes can take off and land by themselves today,” he said. “You would not get on an airplane to go across the Atlantic without a pilot.”

*This story appeared previously in our sister publication Claims Journal.
