The market for privately written inland flood insurance in the United States is growing rapidly, but flood modeling is still in its infancy. As a result, underwriters need to be aware of the differences that exist among commercial flood models, experts agree.

Executive Summary

Insurers have to use U.S. flood models if they want to start writing private flood risk, but the models are in the early stages of development and output can vary. As a result, underwriters need a deep understanding of how the models work and their limitations.

“Flood models are hugely important in making private flood viable and making it possible to do a proper job of setting rates,” said Nancy Watkins, principal and consulting actuary at actuarial and consulting firm Milliman, who is based in the company’s San Francisco office.

“Insurers have to use these models if they want to start writing private flood, measuring their risk or even evaluating it,” she emphasized. “But they need to be cautious consumers of the information.”

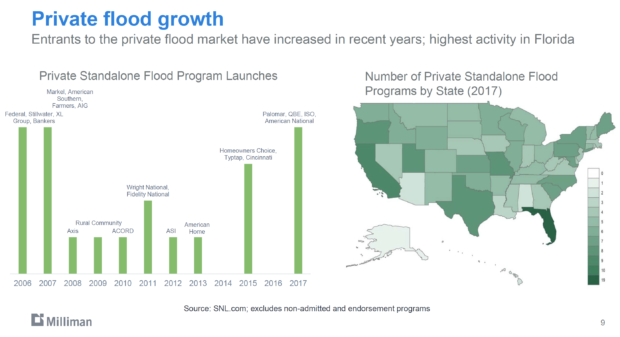

The U.S. market for privately written flood insurance grew by 51.2 percent in 2017, according to figures compiled by S&P Global Market Intelligence, which noted that state-level markets are growing more competitive and less profitable.

As insurers and reinsurers begin to put their toes in the water of U.S. flood risk, they need to swim carefully because U.S. flood models are relatively new—having been rolled out within the last five years—and results can vary widely.

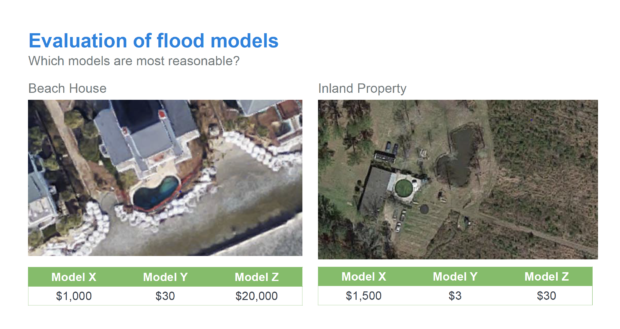

To demonstrate model variances, Watkins cited an actual example of a house, located adjacent to the beach, with a swimming pool, protected against the ocean only by a sea wall. In a portfolio analysis conducted for a client, Milliman examined the output from three different models. One model gave the property an average annual loss (AAL) of about $1,000, another model gave it an AAL of $30 and the third model gave it an AAL of $20,000.

She cited another example of inland flood results, where a Milliman analysis revealed anomalies among the results of three models: an AAL of $1,500 for one, $3 for another, and $30 for the third.

“What would you charge the premium for a house where your model tells you the average loss is $1,500? Your flood insurance premium would have to be at least $3,000,” Watkins said. “On the other hand, the second model tells you your average premium should be negligible, because the expected loss is only $3.”

She explained that these “very divergent results” are typical of what happens with commercially available flood models for both coastal and inland flood risks. (See flood graphic below.)
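One simple way to quantify that divergence is the ratio of the highest to the lowest modeled AAL for a location. The sketch below applies it to the two examples Watkins cited; the function name `spread_ratio` and the review threshold of 10 are illustrative assumptions, not a method attributed to Milliman.

```python
def spread_ratio(aals):
    """Ratio of the highest to the lowest modeled AAL for one location."""
    lowest = min(aals)
    if lowest <= 0:
        raise ValueError("AALs must be positive to compute a ratio")
    return max(aals) / lowest


# AAL figures (in dollars) from the two examples cited in the article.
examples = {
    "beachfront": [1_000, 30, 20_000],
    "inland": [1_500, 3, 30],
}

# Hypothetical cutoff: flag any location where models disagree by more than 10x.
REVIEW_THRESHOLD = 10

flagged = {
    name: spread_ratio(aals)
    for name, aals in examples.items()
    if spread_ratio(aals) > REVIEW_THRESHOLD
}

for name, ratio in flagged.items():
    print(f"{name}: highest AAL is about {ratio:.0f}x the lowest")
```

Both locations are flagged, since the models disagree by a factor of several hundred in each case.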

Federico Waisman, head of Analytics at Ariel Re, which is part of Argo Global, noted that his company conducts regular analyses or comparisons of potential losses calculated by commercial flood models—using the same conditions, locations, number of properties, values and exposures for each.

“There are many similarities between the models, but there are a lot of differences,” he noted. Late last year, Waisman gave a presentation in which he described some of the differences in the flood models of four modeling firms.

For example, in one analysis, the models revealed different annual frequencies for expected U.S. inland flood events. AIR had the highest tally at 69 events per year, followed by KatRisk at 36, CoreLogic at 12 and Impact Forecasting at 10.

In another example, Waisman asked the modeling companies to provide ground elevation for 1.2 million locations, which revealed differences of three to 30 feet. “That’s a difference between being completely flooded and not flooded at all.”

Other differences were found in modeled losses for 1-in-100-year events—with models differing by a factor of two for single events and by a factor of three for aggregate losses modeled at the 1-in-100-year level. Waisman also observed that when looking at results by region, the differences between the models increase.

Challenge of Limited Data

A major challenge to modeling flood is that vendors are working with limited data.

“Given that the primary flood market in the U.S. is just starting to grow, the availability of detailed event loss data to calibrate the model is not as extensive as for hurricanes,” said KatRisk, the Berkeley, Calif.-based modeling firm, in an emailed statement.

In addition, the geographical gradient between low and high risk is very steep and requires modeling at high resolution, said KatRisk, which was founded in 2012. It released its U.S. probabilistic flood model in 2017.

While some data exists from the U.S. National Flood Insurance Program (NFIP), individual policy-by-policy losses have not been made public, said Waisman in an interview with Carrier Management.

Further, flood is a very difficult peril to model, especially U.S. flood, which is extremely challenging because of the sheer amount of data, said Waisman. “It’s not only a very vast country, but there are so many climates or drivers of flooding,” such as wind, coastal surges and precipitation flooding from hurricanes, torrential rains in California and snow melt in Colorado, to name just a few of the variables.

Seasonality—the distribution of events throughout the year—is handled differently by modelers, he said, noting that some models may not incorporate the different U.S. weather patterns.

Waisman affirmed that some models have a peak of events in a particular period, like in the summer, while others show constant seasonality across the regions. “As a result, you can see that maybe the model is not sophisticated enough to pick up on the very different weather patterns between California and the Southeast Coast of the U.S.”

Underwriters need to consider such differences when gauging the model’s overall value and accuracy, he said, because seasonality is an important aspect of correlation of risks—whether several regions will get hit in one month and how many will occur in the year.

Underwriting Flood Risk

What can underwriters and risk managers do about model discrepancies?

Watkins said that Milliman works with clients that are entering the flood risk market—first by running a portfolio of risks through a model, either using the company’s dataset or a market basket of exposures that are representative of the flood risks in the state or region.

“Starting with a fairly robust dataset, we’d run them through the model, and then we would assess them for reasonability,” she said. “We look for discontinuities. We look for results that are just illogical.”

For example, “we would look for low losses in high-risk areas, or alternatively, very high losses in low-risk areas,” Watkins said, explaining that such discontinuities can be evidence of something going on inside the model that is geographically inaccurate.

“We also look at whether the models have all of the secondary modifiers that reflect important risk characteristics, to see if the model is paying attention to them because there are a lot of contributors to flood risk,” Watkins explained. These modifiers would include whether the home has a basement, which is riskier than a home without a basement, as well as the home’s relative elevation, which determines how high the house is in relation to the land around it.

“You need to make sure they have all the elements of flooding that you’re going to have to pay for if you’re an insurance company,” she went on to say.

“There’s typically a component that’s non-modeled, and if that’s the case, you need to know what it is, and you need to figure out how you’re going to price for it—whether you would just put in a minimum premium, whether you need to come up with other data from another place or maybe use another model,” Watkins said.

When conducting such an analysis, Milliman takes the data needed to price a risk, or to model a risk, and appends GIS (geographic information systems) variables. These variables should have relationships to risk, like the distance of the property to the coast, river or ocean; whether the house is on flat land where water could pool or a hillside where torrential rain will run down the slope.

Waisman said underwriters and risk managers often will benchmark vendor models against historical loss data and will judge them against their own expectations of what they think, relatively speaking, the risks could be. “You must calibrate against historical experience, both on the hazard side and on the loss side, if this information is available.”

Underwriters can use stream gauge data from the United States Geological Survey (USGS), as an example, to benchmark past events against the models, he suggested. If there’s a big range of numbers, “you can use history to figure out what the actual number should be and figure out which of these vendors you think is the most accurate one.”

Of course, the underwriter needs to know the underlying perils, weather patterns, and seasonality of rain and snow, etc., to then be able to judge a model’s accuracy, Waisman continued.

However, experienced flood risk underwriters should be able to take the output from the model and come to a decision about which of the vendors have models that are good enough to be able to use, which of the models they feel need a lot of modifications and which ones they may not use at all, he said.

Underwriters need to understand where the models work and where they don’t—what things they cover and what they don’t cover, Waisman continued. “They need to be thoroughly aware of the risks and assumptions about them.”

Watkins said when insurers are designing their underwriting rules and their rating plans, they should consider how much risk they’re intending to take and what they understand about the risk, based on their knowledge of the local geography. Then they need to compare that information with the model’s numbers and determine whether they intuitively make sense, she continued.

And in areas where it’s just a total question mark, maybe that’s a place where you want to build in a higher risk load, charge a higher minimum premium or underwrite to leave those policies out entirely, Watkins explained. “If you’re entering the flood market, you don’t have to write everywhere. You can put on an amount of risk that you’re comfortable taking on and start watching how the models perform after an event.”

When insurers enter the U.S. flood market, sometimes they will rely on the expertise of their reinsurers. That’s why Hiscox Re & ILS launched a new U.S. personal lines flood product late last year, called FloodXtra, which aims to help its insurer partners provide their customers with more affordable flood insurance, thereby helping to fill the flood insurance gap.

“The birth of flood models in the last four or five years has enabled Hiscox and other carriers to get into the market, but the models are not yet at the point where you can treat them as a black box and take them straight off the shelf,” said Katy Sivyer, underwriter for the North America and Caribbean at Hiscox Re & ILS.

She emphasized that Hiscox Re & ILS has done the legwork: The company spent the last four years getting comfortable with the analytics of U.S. flood models and developing a deep understanding of the flood peril. With the FloodXtra product, Hiscox does a bit of hand-holding for insurers that are new to the market by offering quota-share arrangements and all the latest analytic technology and flood modeling.

Watkins said Milliman typically performs analyses of models for insurance companies and sometimes for its own research. “We’ve asked the modelers, ‘Can you run our market basket so we can kick the tires?’ So if somebody asks us about it, we’ll know how the model works.”

If a client prefers a particular model, Milliman will use it but will build in variables for the portion of the loss that it’s not modeling, said Watkins, noting that the company has been working in flood models for about three or four years, comparing models as part of market-feasibility studies for companies thinking about getting into flood risks. She said Milliman also has done work comparing models for the NFIP.

“We provide information about a model and what its limitations are, what its strengths are, and what needs to happen to shore up the limitations.”

If, for example, the analysis flags an AAL problem for just one house, “we would probably determine that the insurer’s rates aren’t wrong,” she said. However, if there is a pattern of “funny numbers” that could throw off the results in a material way, then that must be considered, she added.

Watkins said there are many reasons results can be wrong or misleading. “We need to understand what we’re looking at in order to know what we can do with it.”

Commenting on what insurers and reinsurers should do to assess risk when model outputs vary, Peter Bingenheimer, senior vice president at Boston-based AIR Worldwide, said: “We strongly encourage carriers to understand the model underpinnings and how the model was validated. It’s also important to consider the quality of the exposure data being input into the model, including location accuracy and building details, such as the presence or absence of a basement, first floor height, floor of interest, and location of critical services and fixtures. Finally, as with all models, modeled losses for flood should be used as one of multiple inputs into risk management decisions. Information such as flood zone, elevation and distance to body of water can offer additional insights.” (AIR’s inland flood model was released in 2014.)

KatRisk recommends that underwriters fully understand what is included and not included as well as model development methodologies. Some examples are:

- Is tropical cyclone rainfall included in the model?

- What is the resolution of the model? Can flood maps be visualized in order to determine the reasonableness of flood patterns?

- Has hydraulic modeling been performed everywhere, or have simplified statistical models been utilized in some areas (particularly important in modeling non-riverine surface runoff flooding)?

- How many events per year are modeled, and how does that compare to the historical record?

- What vulnerability assumptions have been made relative to the first-floor elevation of buildings?

- Is the runtime of the model fast enough such that sensitivity analyses can be efficiently performed?

Does Blending Work?

Sometimes insurers will decide to blend (or average) model results, which needs to be done with care and a good understanding of the underlying risk.

Dan Dick, head of Aon Benfield’s catastrophe management team, said averaging or weighting the modeled results can often serve as the most sufficient view of risk.

“The modeled results serve as one data point that informs the buying decision. When modeled results differ, Aon Benfield works with the buyer to understand which model they may want to give preference to, based on which model may best reflect their view of risk,” he said. “All models have strengths and weaknesses, so differing results actually provide an opportunity to better understand these.”

When asked about blending models, Watkins said that blending can help but has limitations. She cited her analysis of three models: one that came up with an AAL of $1,000 for a beachfront property, a second with an AAL of $30 and a third with an AAL of $20,000. “Averaging two models would get you a completely different result than averaging the other two models or averaging all three models.”

If you take the first two numbers—the $1,000 and the $30—and you calculate a 50/50 blend, that gives an AAL of $515, she said. On the other hand, Watkins added, if you take a 50/50 blend of the second model result of $30 and the third model result of $20,000, that creates an AAL of $10,015.
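The blend arithmetic is easy to reproduce. The following is a minimal sketch in Python; the helper name `blend` and its equal-weight default are assumptions made for illustration, not a description of any tool Milliman uses.

```python
def blend(aals, weights=None):
    """Weighted average of per-model AAL estimates (equal weights by default)."""
    if weights is None:
        weights = [1] * len(aals)
    if len(weights) != len(aals):
        raise ValueError("need exactly one weight per model")
    return sum(a * w for a, w in zip(aals, weights)) / sum(weights)


# AALs (in dollars) for the beachfront property, one per model.
model_1, model_2, model_3 = 1_000, 30, 20_000

first_two = blend([model_1, model_2])            # 50/50 blend of models 1 and 2
last_two = blend([model_2, model_3])             # 50/50 blend of models 2 and 3
all_three = blend([model_1, model_2, model_3])   # equal-weight blend of all three

print(first_two, last_two, all_three)  # 515.0 10015.0 7010.0
```

Depending on which models go into the blend, the same property ends up with an AAL of $515, $10,015 or $7,010, which is why the choice of models and weights matters as much as the blending itself.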

“Which one of those numbers is good? In my opinion, for a house right on the beach with a swimming pool, which looks pretty big and nice, an AAL of $30 is the outlier. That’s the number that doesn’t make sense. And an outlier can have a huge impact on the average.”

Although she is not opposed to blending, she cautions it won’t take care of all your problems with models. “You still need to understand the underlying risk. You need to understand that there’s one model that’s an outlier from the other two models and how important it is [to accurate underwriting].”

“For me, blending requires that you calibrate, or you correct, each of the models individually for blending to be able to work,” said Waisman.

New Technology

There has been an earthquake risk market and a hurricane risk market for many years, which created the need for vendors to develop models for those risks. Flood models, on the other hand, came on the scene more recently as a result of the opening of the commercial market.

“The only reason we started seeing the development of flood models in the U.S. is because of the potential of NFIP going public and the opening of the market,” said Waisman. “The vendors won’t create a model if they cannot sell licenses. And insurers and reinsurers will not buy a model license if they’re not selling the flood product. It’s a Catch-22.”

While modeling vendors only recently started offering U.S. flood models, they have existed for many years in other parts of the world, such as Germany, Belgium, France and the U.K., as well as Asia.

As time goes by, he affirmed, the data available to feed the models will become more and more granular and accurate, following a similar trajectory to hurricane models, which were first offered in the early 1990s. “These hurricane models have been benchmarked through Hurricanes Andrew, Ike, Sandy, Katrina, Wilma, etc. They have all of that history and have been able to benchmark those losses against the models and calibrate them. The same will happen for the flood models,” Waisman explained.

The models will become more accurate when underwriters, agents and brokers begin asking flood-specific questions. Waisman suggested questions such as: Do you have a basement? What’s the elevation of your first floor? If you’re a commercial building, where do you have your valuables? Which is a floor of business interest? What sorts of measures do you have to defend against flood? Do you have any private flood defenses, such as pumps?

Previously, underwriters have not had the opportunity to ask such questions—but that’s changing. “When the models are developed, you start collecting the data,” Waisman said.

That sentiment was echoed by RMS, the Newark, Calif.-based risk modeling firm. “Because flood exposure information has developed more slowly compared to wind, it is more challenging to develop models that estimate the financial loss to a flooded building,” said RMS in emailed comments.

“For example, the height of the first floor (FFH) relative to the ground is essential for determining the flood depth that will cause damage, but this information is not always directly available to the insurer,” RMS said. “We’ve built intelligence into the model to estimate building attributes like FFH, using other more accessible information about the property such as foundation type, until FFH and other key attributes become more readily available at the underwriting level.”

RMS currently accounts for coastal flood risk in its North Atlantic Hurricane Model. It will be releasing a U.S. Inland Flood Model later this year.

After every major flood event, RMS deploys teams “to collect ground truth” and compare that data to the model. RMS also gathers flood gauge data (e.g., from the USGS), other meteorological data, as well as claims and loss information from the industry as a whole and from individual clients. “All this information is used to improve the modeling methodology and calibrate the model to actual loss experience,” RMS said.

“We’re working with the first generation of U.S. flood models,” said Waisman. As the years go by, new versions will be released, he said, noting that every flood event helps models improve.

Regardless of output and differences in risk assumptions, the flood model vendors Waisman analyzes “have done great work.” He noted: “It’s quite an accomplishment to be able to put together a U.S. flood model. It’s a big investment in resources and money. You don’t turn them around in six months; they’ve been at work on them for a year and a half to two years.”
