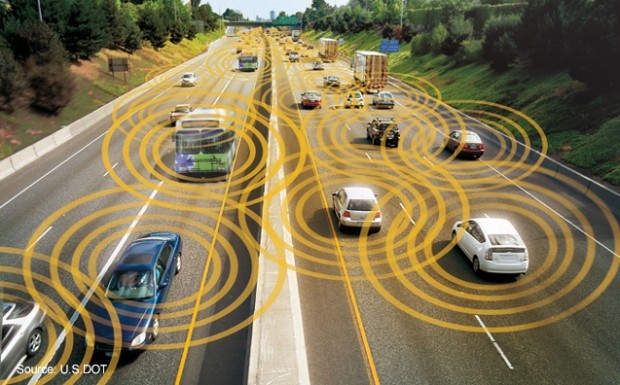

Competitors locked in a cut-throat race to bring fully self-driving cars to American roads are being asked to share experiences with “edge cases,” rare scenarios that pose the most vexing safety challenges. Regulators want them to make vehicle performance assessments public so that all of the companies can learn from the data and enhance safety.

“Highly automated vehicles have great potential to use data sharing to enhance and extend safety benefits,” reads page 18 of the Department of Transportation’s 112-page document. “Thus, each entity should develop a plan for sharing its event reconstruction and other relevant data with other entities.”

That represents a significant shift for automakers and tech companies, who fiercely protect their data and aren’t known for collaboration. While there are commercial business practices and consumer privacy issues to be mindful of, there’s no reason companies shouldn’t share information about dangerous incidents, a senior Transportation Department official told reporters Tuesday.

“People don’t have to make the mistakes their neighbor made,” the official said, speaking on condition of anonymity. “There’s group learning.”

Traffic Deaths

An estimated 35,200 people were killed in U.S. traffic accidents last year, and self-driving cars are seen as a leap forward that will not only save lives but improve mobility for the elderly and disabled. In drafting the guidelines, regulators have looked to the Federal Aviation Administration as a model. Airline data is shared with a third-party repository system.

But technology companies will probably bristle at having to share data after an accident involving a self-driving car, said Katie Thomson, former senior counsel at the Department of Transportation and FAA and now a partner at the law firm Morrison & Foerster.

“It’s a significant data set,” Thomson said in a phone interview. “That’s where technology companies get territorial.”

The guidelines are voluntary: Regulators are asking, not compelling, companies to share.

‘Huge Value’

“This field is extremely competitive, and data has huge, huge value,” said Jonathan Handel, an attorney with TroyGould who has written about self-driving cars. “Cooperation with the government is not a core value in Silicon Valley. It’s a libertarian environment. This document says ‘we really want you to share your data,’ but they can’t force them to. I don’t think Silicon Valley is going to turn over the keys to the kingdom.”

Google, Tesla and Uber declined to discuss the proposed guidelines, which are expected to go into effect after a 60-day public comment period. Uber referred reporters to a statement by the Self-Driving Coalition for Safer Streets, an advocacy group. Uber, Lyft, Google, Ford and Volvo are founding members of the group. David Strickland, the group’s general counsel, is the former head of the National Highway Traffic Safety Administration.

“Our members are supportive of the appropriate transfer of information,” said Strickland during a conference call with journalists Tuesday. When asked about sharing data with competitors, Strickland said the topic was “worthy of a longer discussion.”

Tesla Autopilot

Tesla’s driver-assistance features, which the company calls Autopilot, have been under intense scrutiny in the wake of a fatal crash in Florida May 7. Probes of the accident by NHTSA and the National Transportation Safety Board are ongoing. All Tesla vehicles built since October 2014 — a fleet of more than 90,000 cars worldwide — have Autopilot, which drivers have to actively engage. Tesla vehicles are driving roughly 1.5 million miles on Autopilot each day — a treasure trove of real-world data for the Palo Alto, California-based company.

In the government’s policy document, the DOT said it could seek “pre-market approval” of self-driving technology, which would be a sea change in how new vehicles are regulated. Instead of self-certification, the government could seek the authority to test cars before they hit the roads.

“Pre-market approval means the government will be running these vehicles on test tracks,” said Rick Walawender, head of corporate law and the autonomous vehicle team at law firm Miller Canfield. “NHTSA and the DOT seem pretty enamored with this approach.”

The new guidelines include recommendations for states to pass legislation on introducing self-driving cars safely on their highways. The guidelines say states should continue to license human drivers, enforce traffic laws, inspect vehicles for safety and regulate insurance and liability. The federal government, the guidelines say, should set standards for equipment, including the computers that could potentially take over the driving function. It will also continue to investigate safety defects and enforce recalls, though if software fixes can be rolled out to a fleet, cars won’t physically need to come into a dealership or repair shop.

Also Tuesday, NHTSA released a final enforcement guidance bulletin to clarify how its authority to order recalls will apply to self-driving cars. The agency said semi-autonomous driving systems that fail to adequately account for the possibility that a distracted or inattentive driver might not be able to take over in an emergency could be declared defective and subject to a recall. This scenario is similar to the one that occurred in the fatal May crash involving a Tesla Model S.

“NHTSA is absolutely extending its enforcement authority to all the new autonomous vehicles,” NHTSA administrator Mark Rosekind said. “That’s the bottom line.”
