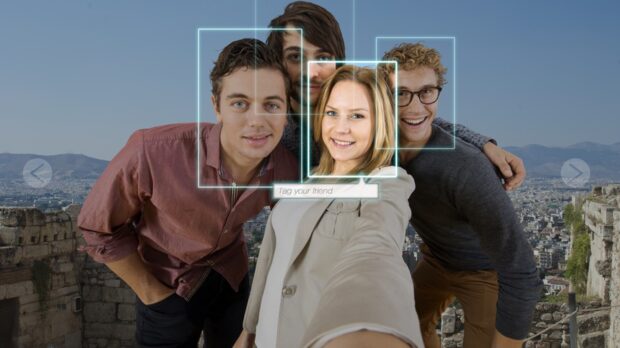

Facial recognition startup Clearview AI has agreed to restrict the use of its massive collection of face images to settle allegations that it collected people’s photos without their consent.

In a legal filing Monday, the company agreed to permanently stop selling access to its face database to private businesses or individuals around the U.S., limiting what it can do with its ever-growing trove of billions of images pulled from social media and elsewhere on the Internet.

The settlement—which must be approved by a county judge in Chicago—will end a two-year-old lawsuit brought by the American Civil Liberties Union and other groups over alleged violations of an Illinois digital privacy law. The company still faces a separate privacy case before a federal judge in Illinois.

Clearview is also agreeing to stop making its database available to Illinois state government and local police departments for five years. The New York-based company will continue offering its services to federal agencies, such as U.S. Immigration and Customs Enforcement, and to other law enforcement agencies and government contractors outside of Illinois.

“This is a huge win,” said Linda Xochitl Tortolero, president of Chicago-based Mujeres Latinas en Accion, which works with survivors of gender-based violence and was a plaintiff in the case along with the ACLU and other groups.

Among the concerns raised by Tortolero’s group was that photos posted on social media sites such as Facebook or Instagram—and turned into a “faceprint” by Clearview—could end up being used by stalkers, ex-partners or predatory companies to track a person’s whereabouts and social activity.

A prominent attorney who was defending Clearview against the lawsuit said the company is “pleased to put this litigation behind it.”

“The settlement does not require any material change in the company’s business model or bar it from any conduct in which it engages at the present time,” said a statement from Floyd Abrams, a lawyer known for taking on high-profile free speech cases.

Abrams noted that the company was already not providing its services to police agencies in Illinois and agreed to the five-year moratorium to “avoid a protracted, costly and distracting legal dispute with the ACLU and others.”

Illinois’ Biometric Information Privacy Act allows consumers to sue companies that don’t get permission before harvesting data such as faces and fingerprints. Another privacy lawsuit over the same Illinois law led Facebook last year to agree to pay $650 million to settle allegations it used photo face-tagging and other biometric data without the permission of its users.

“It shows we can fight these companies when they’re taking these kinds of actions,” Tortolero said of the Clearview settlement. “It also highlights the fact that there are many ways that social media—and the technology companies that collect this kind of information—can be harmful to Americans.”

The settlement document says Clearview continues to deny and dispute the claims brought by the ACLU and other plaintiffs. But even before Monday’s settlement, the case had been curtailing some of the company’s controversial business practices.

Clearview AI co-founder and CEO Hoan Ton-That told The Associated Press in April that the company was preparing to launch a new “consent-based” business product to compete with the likes of Amazon and Microsoft in verifying people’s identity using facial recognition.

The new venture would use Clearview’s algorithms to verify a person’s face but would not involve its trove of some 20 billion images, which Ton-That said is now reserved for law enforcement use. That’s a shift from earlier in Clearview’s business history when it had pitched the technology for a variety of commercial uses.

Regulators from Australia to Canada, France and Italy have taken measures to try to stop Clearview from pulling people’s faces into its facial recognition engine without their consent. So have tech giants such as Google and Facebook. A group of U.S. lawmakers earlier this year warned that “Clearview AI’s technology could eliminate public anonymity in the United States.”

While Monday’s settlement “reins in Clearview’s practices significantly,” it should not end scrutiny of the company by Congress, state legislatures and regulators, said Nathan Freed Wessler, deputy director of ACLU’s speech, privacy and technology project. Much of the strength of Clearview’s artificial intelligence technology—now a selling point for police and other uses—is that it was able to “learn” from all of the faces it scanned across the publicly accessible Internet.

“This company’s approach was effectively a Silicon Valley mentality of let’s break things first and then figure out how to clean up the mess later in order to try to make a profit,” Wessler said. “They broke through a very strong taboo that had kept big tech companies like Google and others from building the same product that they had the technological capability to do.”
