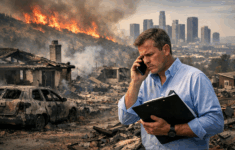

The new year is bringing change to catastrophe risk management. New tools and technology are now available, providing insurance companies with:

Executive Summary

In the past, insurance companies have struggled to develop consistent risk management strategies while relying on opaque catastrophe models whose output changes frequently and significantly. According to Karen Clark, that situation is now changing.

For the past two decades, insurance companies have relied on catastrophe models as their risk management infrastructure, building important pricing and underwriting processes around the models and their output. While the models are valuable tools, they have shortcomings, notably a lack of transparency. Newer technologies provide more sophisticated, open, and robust platforms for catastrophe risk management.